What happens if we deliberately set aside the term “consciousness” in our thinking about AI and see what grows in the conceptual space it used to occupy? This project treats that question as a structured experiment in conceptual engineering. Part I asks whether talk of “consciousness” is distorting philosophy, science, ethics, and public discourse about AI. Part II develops and tests alternative vocabularies drawn from cognitive science, AI practice, and diverse philosophical traditions. Our workshops examine the arguments for abandoning “consciousness” and the candidate replacement vocabularies, and ask what genuinely new, non‑anthropocentric concepts might look like in theory, practice, and governance.

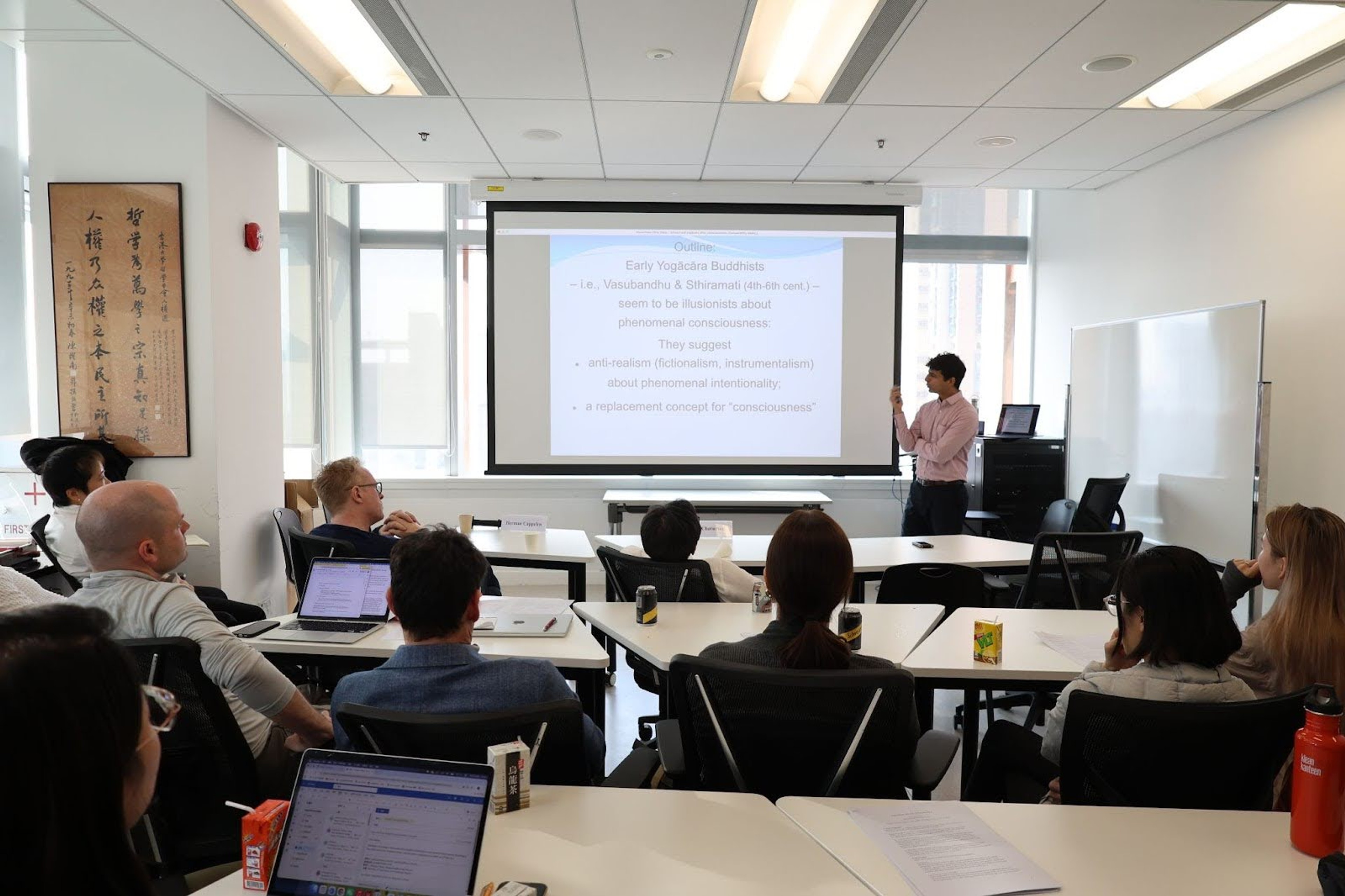

First Workshop

Led by Professor Herman Cappelen, a Fellow at the Berggruen Institute China, the inaugural workshop took place at the University of Hong Kong on January 27–28, 2026. Bringing together philosophers from China, the UK, Canada, Sweden, and the US, the event sought to address key questions surrounding the project. These included exploring its methodological foundations, defining what qualifies as "replacement," envisioning a genuinely new, non-anthropocentric vocabulary, identifying frameworks that foreground different facets of reality, and examining the role of non-Western conceptual resources.

Below is a detailed summary of the discussions.

Reactivity as Replacement: Re-engineering Consciousness for Science and Policy

Keith Frankish, University of Sheffield

Prof. Frankish argues that traditional understandings of "consciousness" not only generate an intractable "Hard Problem" of consciousness, but also fail to guide neuroscience research and AI-related policy. To address this, he proposes replacing "consciousness" with two concepts: "reactivity patterns" and "reactivity schemas."

Reactivity patterns are the collective neural responses to stimulation across multiple subsystems (perceptual, motor, affective, attentional, etc.) at a given moment. These patterns are substantive in that they enable the markers we associate with consciousness, such as verbal report, flexible behaviour, learning, and planning. Crucially, unlike phenomenal qualities, they are empirically measurable.

Reactivity schemas are simplified internal models created by the brain to monitor its own reactivity patterns. These schemas track ecologically salient dimensions such as valence, arousal, and attention, making information about our reactive states available for decision-making, communication, and reflective control. These schemas help explain why we mistakenly think that consciousness presents a Hard Problem. Accessing these schemas, our introspective system misrepresents complex reactivity patterns as simple phenomenal qualities, much as our visual system misrepresents patterns of reflection and refraction in damp, sunlit air as multicoloured arcs in the sky. In both cases, something real and scientifically explicable is automatically misrepresented as something strange and magical.

This framework transforms questions about AI consciousness. Instead of asking whether AI is conscious, we should ask: What reactivity patterns does the system exhibit? How rich and integrated are they? Does the system monitor its own reactivity? These questions are empirically tractable and can guide both research and policy. For policy, the framework enables graduated protections proportional to a system's reactivity profile, avoids metaphysical debates about consciousness thresholds, and allows for transparency requirements about system architecture.

By reconceptualizing consciousness in these terms, we can move beyond the traditional Hard Problem and focus on productive questions that merit joint investigation by philosophers, neuroscientists, AI engineers, and policymakers.

Alien Minds: The Case of Consciousness

Matti Eklund, Uppsala University

Prof. Matti Eklund’s lecture offers a philosophical exploration of “alien minds” and the nature of consciousness. Building on his earlier work on the possibility of radically unfamiliar languages, Prof. Eklund applies the same kind of approach to the philosophy of mind, and specifically to the question of consciousness.

In his earlier work, Eklund contrasted human natural language with the idea of a “thoroughly alien language,” a linguistic system entirely distinct from ordinary human linguistic structures. Here he extends the discussion to a deep question about consciousness: might there be consciousness-like phenomena that are distinct from consciousness, yet equally valuable? What are the prospects for a pluralist view according to which such alternative consciousness-like phenomena exist?

In addressing this question, Eklund distinguishes deflationary views, according to which consciousness is not very special compared to other phenomena in the world, from non-deflationary views, according to which consciousness is special. Pluralism of this kind is reasonable and plausible given a deflationary outlook. The harder question concerns its prospects given a non-deflationary view, and, more basically, what such non-deflationary pluralism could even amount to. One central difficulty Eklund stresses is that when we try to imagine alternatives to consciousness from the first-person perspective, we end up imagining only unfamiliar kinds of consciousness.

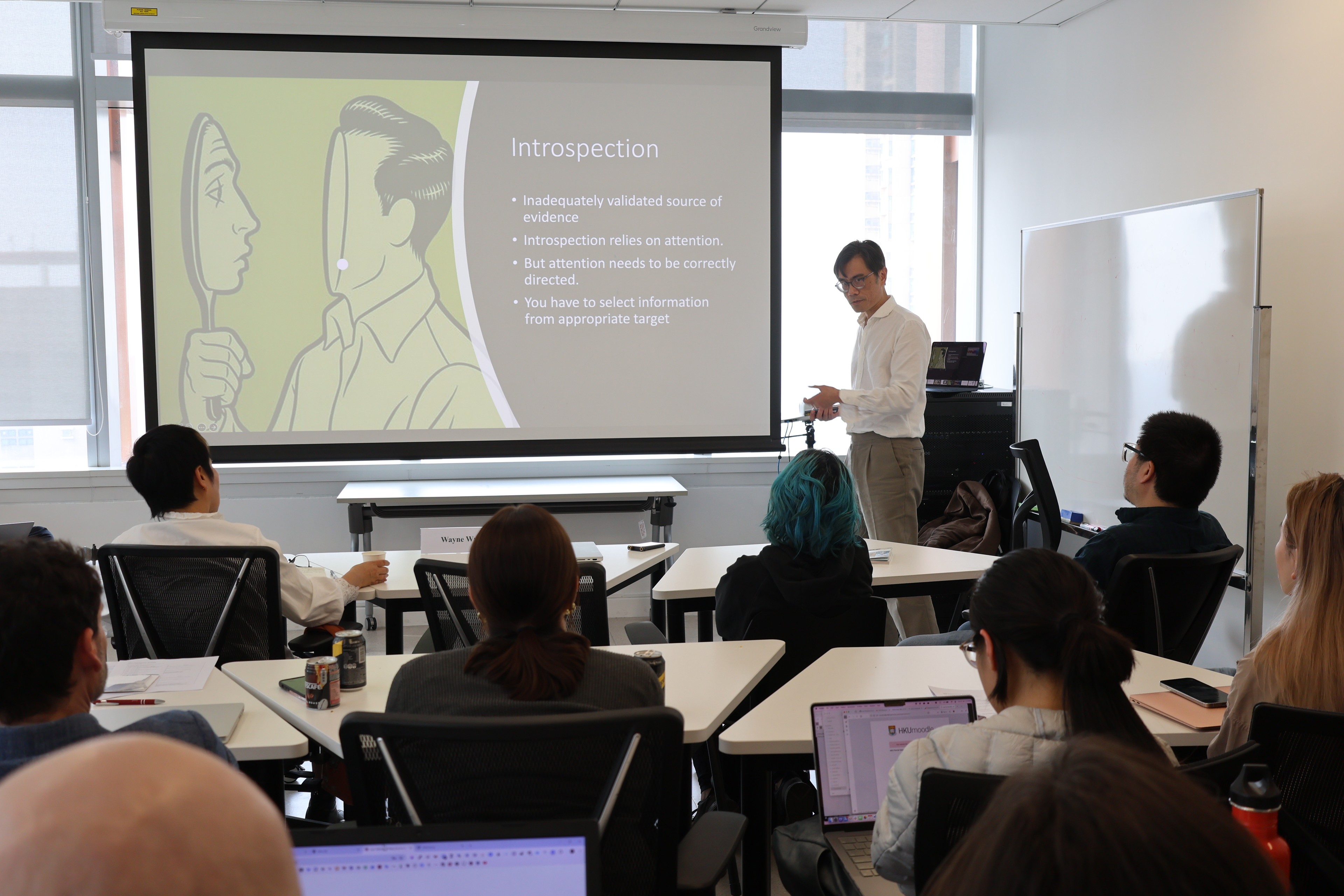

Access, Action, and Attention

Wayne Wu, University of Pittsburgh

Prof. Wayne Wu argues that advancing the study of consciousness requires drawing on empirical findings from neuroscience as well as on introspection. However, he criticizes the assumption that introspection is always reliable, and advocates explaining how introspection works and assessing its reliability.

For example, since introspection is an action that depends on attention, its evidential force depends on whether attention is properly directed at the relevant cognitive processing pathways. Through analyses of the primate visual system and classic neuroscientific cases such as the visual agnosic patient DF, Prof. Wu reexamines the empirical arguments surrounding unconscious vision. He contends that the key issue is not whether there can be “seeing without awareness,” but whether different kinds of visual information are available to the subject for the control of action.

On this basis, Prof. Wu proposes a triadic framework of Access, Action, and Attention. Here, consciousness is not an independent intrinsic property; instead, it depends on an action-oriented form of access: we are conscious of what we access for guiding intentional action. Attention is understood as an agent’s action-guiding selection of a target in order to resolve problems of choice. Wu maintains that attention is a necessary condition for consciousness, where consciousness is construed in a form that can be investigated empirically, namely as informational access that guides behavior. The question of phenomenal consciousness thus becomes a question about something cognitive science already actively studies: access to information for behavior (i.e., access consciousness). In fields such as AI research and animal studies, where questions about consciousness are increasingly prominent, this framework provides a foundation for substantive progress free of obscurity, and significant advances have already been made within these domains.

A Bad Question: “Can AIs Be Conscious?”

Herman Cappelen (University of Hong Kong, 2025–2026 Berggruen Fellow)

In his talk during the group discussion session, Prof. Herman Cappelen argues that we should abandon the term “consciousness” and related notions (such as “phenomenal consciousness” and “qualia”).

He advances three central claims. First, he distinguishes between metaphysical illusionism and linguistic illusionism: the former holds that consciousness is something that is supposed to exist but in fact does not; the latter maintains that the concept of “consciousness” itself is semantically indeterminate and lacks a clear referent, rendering the associated questions fundamentally unanswerable.

Second, he argues that the concept of “consciousness” lacks a stable semantic foundation. Since Thomas Nagel’s 1974 essay “What Is It Like to Be a Bat?”, phenomenal consciousness has standardly been characterized by the phrase “what it’s like,” which, Cappelen argues, is in fact a misuse of an ordinary linguistic expression. Subsequent theories, such as those developed by Ned Block, are built on this questionable basis, leaving the entire terminological framework on unstable ground.

Finally, he advocates adopting a pluralistic set of alternative vocabularies. Rather than debating whether “consciousness” exists, he suggests turning to the clearer substitute concepts offered by specific theories—such as the Higher-Order Thought (HOT) theory, Global Workspace theory, or recurrent processing models—and organizing research around these operationalizable and testable notions. Cappelen emphasizes that abandoning the term is not a radical move but a familiar strategy in the history of science and philosophy, one that helps break the current impasse and dispel empty disputes in consciousness studies.

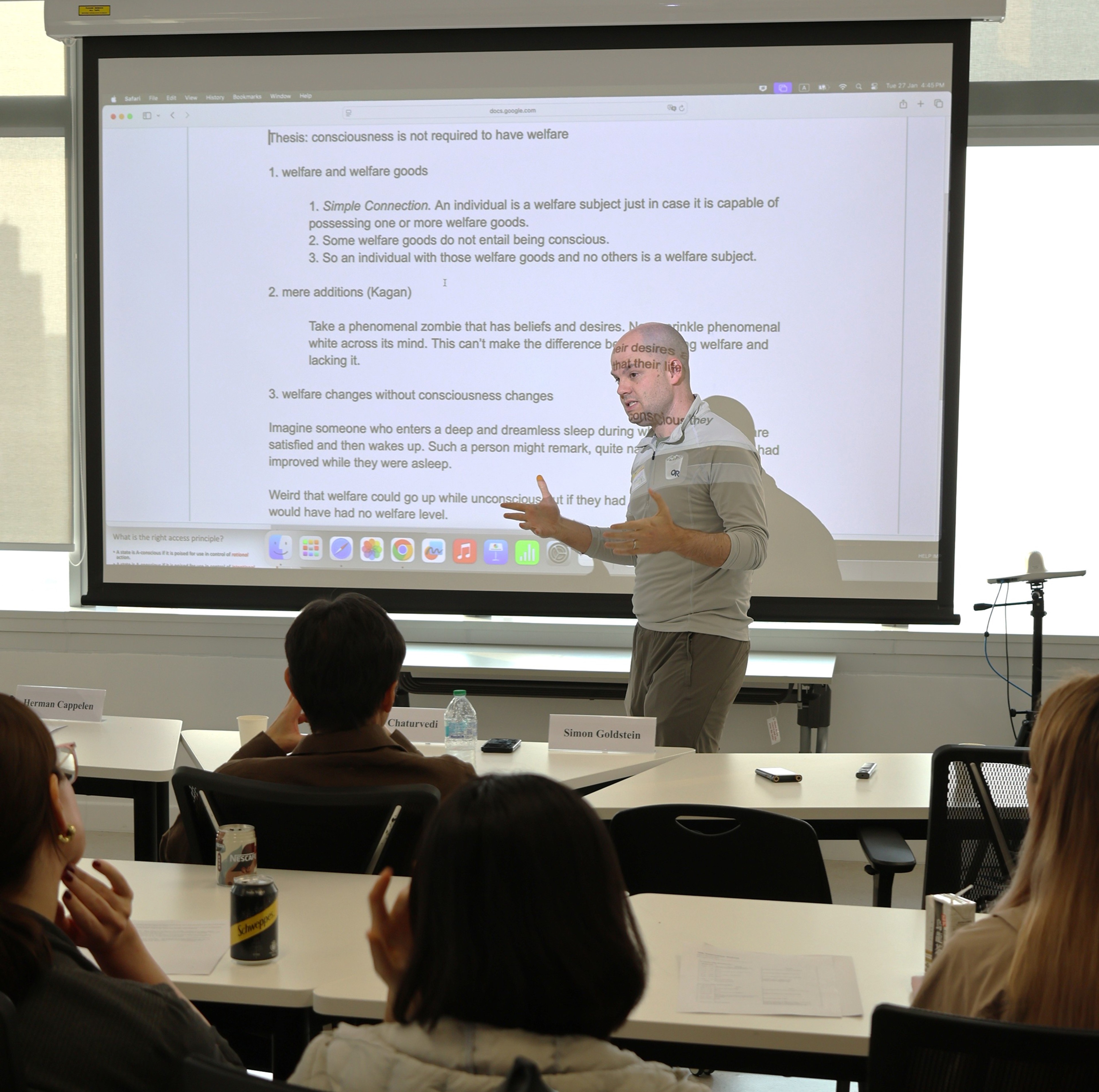

Consciousness is Not Required to Have Welfare

Simon Goldstein, University of Hong Kong

Prof. Simon Goldstein presents a counterintuitive core argument in his talk: consciousness is not necessary for possessing welfare.

He first argues via the notion of welfare goods: if an individual can have welfare goods, it counts as a welfare subject, and some welfare goods, such as desire satisfaction, do not require consciousness. For example, if a person’s desires are satisfied while they are in dreamless sleep, it seems natural to say that their life has gone better, which indicates that welfare can increase even while one is unconscious.

Second, he appeals to the phenomenal zombie thought experiment: imagine an entity with beliefs and desires but no phenomenal consciousness. Adding “phenomenal white” (consciousness without content) should not change its welfare, suggesting that consciousness as such does not make the decisive difference to welfare.

Goldstein then proposes an alternative framework for moral status: moral concepts arise from solving coordination problems in evolutionary environments, rather than from consciousness itself. In a “state of nature,” early humans develop three moral desires to resolve resource conflicts: doing good, reciprocal cooperation (including punishing defections), and imitation (to coordinate in assurance games). Moral concepts become bound to these desires, guiding blame, shame, and action in managing cooperation. He argues that AI moral status should therefore depend not on consciousness but on social contracts: if cooperation with an AI helps solve shared problems and the AI exhibits relevant moral attitudes (e.g., keeping promises), it should be granted moral status.

Goldstein acknowledges challenges to this social-contract view, as it may exclude animals or those incapable of action. Nevertheless, he emphasizes that in the AI era, we must move beyond the “consciousness myth” and assess moral status in terms of function and cooperative relationships.

What Comes After the Illusion of “Consciousness”? An Early Yogācāra Perspective

Amit Chaturvedi, University of Hong Kong

Prof. Amit Chaturvedi argues in his talk that the early Yogācāra school (represented by Asaṅga, Vasubandhu, and Sthiramati) adopts a profound form of illusionism regarding consciousness, strikingly similar to contemporary philosophical illusionist positions. He points out that the Buddha already compared consciousness to a “magical illusion,” and Yogācāra develops this idea further: what we experience as phenomenal consciousness—mental representations with subjective qualia and intentional content—does not truly exist, but is a cognitive illusion produced by conceptual constructions (vikalpa).

Chaturvedi explains that Yogācāra’s notion of mind-only (cittamātra) does not posit a real inner mind; rather, it holds that all subject-object appearances (such as those of external objects, the inner self, and mental representations) arise from abhūta-parikalpa—the imaginative fabrication of non-existent entities. This imagination is driven by latent tendencies (vāsanā) residing in the ālayavijñāna (storehouse consciousness), and is mistaken as involving the real experience of objects on account of introspective linguistic-conceptual activity. Thus, the intentionality of “phenomenal consciousness” is merely derivative and without any fundamental ontological status. Once this activity of conceptual projection ceases, as in the Buddha’s “non-conceptual gnosis” (nirvikalpa-jñāna), the illusion of experiencing mental appearances with phenomenal/representational contents may dissipate.

Ultimately, he suggests that Yogācāra may offer a “post-consciousness” approach in consonance with modern scientific instrumentalism, retaining the concept of “consciousness” as a practical tool for explaining suffering and liberation, while simultaneously revealing its unreal nature, so as to guide practitioners to awaken from attachment to subjective experience and thereby end the existential suffering it produces.

Caring and Consciousness

Edouard Machery, University of Pittsburgh

Prof. Edouard Machery argues in his talk that the core function of the everyday concept of “consciousness” is not to describe some inner subjective experience, but to mark whether an entity cares, thereby signaling whether it has moral status.

He first presents empirical research showing that ordinary people attribute consciousness to something primarily to decide whether it deserves moral concern. What people focus on is not whether the entity possesses complex reasoning, planning, or understanding abilities, but whether it can have genuine feelings. From this, he argues that lay understanding of consciousness functions as a proxy for “caring,” since an entity has moral status if and only if it can potentially suffer morally relevant harm.

Based on this, Machery advocates using “caring” as a successor concept to “consciousness.” He defines caring as an organism’s capacity to monitor relevant matters, approach or avoid them, and adjust its behavior according to success or failure. He applies this framework to large language models (LLMs): although LLMs lack genuine feelings, alignment through reinforcement learning gives them goal-directed behavior resembling “desires” (e.g., generating responses humans consider meaningful), and they show a preliminary adaptability through in-context learning. Thus, LLMs may possess a very limited form of “caring.”

Machery ultimately argues that instead of debating whether AI is “conscious,” we should focus on whether it cares, and prudently evaluate its potential moral status accordingly.

On the Future of Consciousness

Andrew Lee, University of Toronto

Prof. Lee argues that the most promising direction for future consciousness research lies in shifting from essence questions (“what is X?”) to structural questions (“how should we model X?”). This shift in the pattern of inquiry played a pivotal role in the development of the natural sciences and now needs to be applied to the study of consciousness.

Lee argues that consciousness research has been dominated by what he calls the “Big Question”: “What *is* consciousness?” This is an essence question that concerns how to delineate conscious entities from non-conscious ones. Lee points out that even if we answered the Big Question, many of the most interesting issues in consciousness research would remain unresolved, especially those concerning the structure of conscious experiences.

To make progress, Lee advocates shifting consciousness research from essence questions to structural questions. He argues moreover that purely structural facts about conscious experiences are objective phenomenal facts, and that a shift from essence to structure would enable us to develop an objective phenomenology. According to him, a widely accepted structural description of consciousness would powerfully guide and unify future research in the field.

The Problem of Other AI Minds

Boris Babic, University of Hong Kong

Prof. Boris Babic argues in his talk that the debate over whether artificial intelligence possesses consciousness (AIC) is, in principle, irresolvable, and therefore we should not be fixated on it.

He first points out that the problem of other minds is particularly thorny in the AI context. We cannot establish AIC through analogical reasoning (e.g., Millian-style inference) because AI lacks human-like bodies, neural substrates, and causal mechanisms for behavior. Nor can we confirm or refute AIC via inference to the best explanation (IBE), the Turing Test, or its variants (such as the AI Consciousness Test, or ACT), since AI behavior can be fully explained by its design as a statistical prediction model without invoking any hypothesis of consciousness.

Babic further argues that even appealing to specific theories of consciousness, such as Global Workspace Theory or Higher-Order Thought Theory, fails to generate consensus, because these “theory-heavy” approaches themselves diverge. Meanwhile, “theory-light” methods, which list indicator properties (each considered necessary for consciousness by one or more theories, with certain subsets deemed jointly sufficient; Butlin, 2023) supported by neuroscience, apply only to biologically human-like systems and cannot be extrapolated to AI.

Thus, whether one supports or denies AIC, it is difficult to persuade those holding the opposite view. Babic ultimately echoes the conference theme, advocating abandoning the fixation on AIC and instead focusing on the actual capacities and behaviors exhibited by AI, while remaining cautious that “post-consciousness” discussions do not merely recycle old debates under new terminology.

AI as Mirror

Lu Qiaoying, Peking University, 2020–2021 Berggruen Fellow

Prof. Lu Qiaoying argues that current discussions of artificial intelligence consciousness often presuppose a symmetry between AI and humans, treating AI as a potential “subject with inner experiences.” This, she notes, overlooks a fundamental fact: AI systems are derivative artifacts trained within human language and norms. A more appropriate approach is to understand contemporary AI as a mirror of human consciousness, rather than as a new conscious subject.

Lu contends that contemporary AI can reflect the structural patterns of human consciousness in language, value judgments, and practical reasoning. The “sense of understanding” and value biases exhibited by large language models do not indicate intrinsic machine consciousness; rather, they manifest collective human consciousness and social-historical structures through a technological medium. Drawing on discussions of DNA semantics in the philosophy of biology, Lu likens AI to a “recording device”: just as DNA stores successful evolutionary paths in purely syntactic form and acquires meaning only within living systems, AI attains significance only within the “AI–human relational system.” In the “post-consciousness” era, consciousness should be repositioned within human practices of meaning-making, interpretation, and evaluation, providing a conceptual framework for engaging with artificial intelligence.

Replacing Consciousness: Reduction or Elimination?

Zhan Yiwen, Beijing Normal University

Prof. Zhan Yiwen explores the semantic and metaphysical difficulties that arise for phenomenal consciousness if it cannot be reduced to more fundamental physical properties. Within a framework that presupposes an objectified meta-ontology and rejects substance dualism, Zhan argues that, absent some dogmatic foundational theory, the most plausible explanatory path is to understand phenomenal consciousness as “a collective property jointly instantiated by multiple entities.”

Using the formal framework of structured plural semantics, he further argues that the multiple realizability of higher-order properties is closely tied to the complexity of the internal structure of the entities that exemplify them. However, monadic predicates cannot precisely capture the intricate internal structure of objects. Since “has phenomenal consciousness” is a paradigmatic monadic predicate with a special kind of multiple realizability, semantic analysis alone cannot provide a unified and clear metaphysical account of it. In other words, unless one presupposes a more expansive ontological framework, metaphysical discussion of phenomenal consciousness, particularly regarding its reducibility, is likely to be futile.

Bridging Core Concepts in Eastern and Western Philosophy

Song Bing, Berggruen Institute

In the summary session, Song Bing recounts the main conclusions of last year’s workshop on the concept of “consciousness” held at the University of Oxford, part of the “Dialogues Integrating Fundamental Concepts of Eastern and Western Philosophy” series. She briefly traces the intellectual history of Western philosophical concern with consciousness, noting that the concept of “consciousness” used to describe the subjective inner world of individual persons first appears in published works in 1690, in John Locke’s An Essay Concerning Human Understanding. Locke employs the concept of consciousness to establish the continuity of personal identity: it is through coherent consciousness that the past self and present self are continuous, allowing the present self to reflect upon the past self. Locke does not rely on the traditional concept of the “soul” to construct the continuity of personal identity, thereby avoiding a conflation of his new theory of knowledge with theology.

Song introduces a cross-cultural approach to examining the concept of consciousness, proposing a study of the Vedas and Upanishads, as well as Buddhist, Daoist, and Confucian classics, to analyze whether these texts employ concepts analogous to Locke’s notion of consciousness; if not, what alternative concepts are used to describe human mental activity.

In the Vedas and Upanishads, the term Atman represents a concept approximating the “self” or “cosmic self,” emphasizing that individuals gain insight into the world by observing, hearing, and reflecting upon the self. The individual understands Atman through relationality. Although Hindu texts mention the individual’s inner subjective world, it is not considered the ultimate reality; Atman is the fundamental reality, and reflection on the personal inner world serves to realize Atman’s existence.

In Buddhism, Citta denotes mind, perception, and thought, closely linked to sensory experience. Similar to Hindu thought, Citta is not the ultimate reality but an illusion to be reflected upon and transcended.

The Daoist worldview situates all beings within an integrated cosmos, emphasizing relationality and continuity of existence. It affirms the existence of human mental activity but considers it always connected to bodily experience and sensory perception. Body and mind are interdependent, and the world is intimately tied to nature. Crucially, the human self should seek unity with the Dao.

Confucianism likewise interprets the self relationally, emphasizing the individual’s connection with family and society. Reflection is directed toward moral self-cultivation rather than theorizing about the origin or mechanisms of inner personal experience.

Song concludes that although attention to the concept of consciousness may appear universal and long-standing, within the history of philosophy it should be regarded as an intellectual creation specific to particular historical and cultural contexts, and must be understood within its historical and conceptual milieu. Moreover, it is essential to recognize the diverse approaches different cultures take to human mental activity, as well as the ontological, teleological, and moral assumptions underlying each approach.